What Is Big Data? Everything You Need to Know

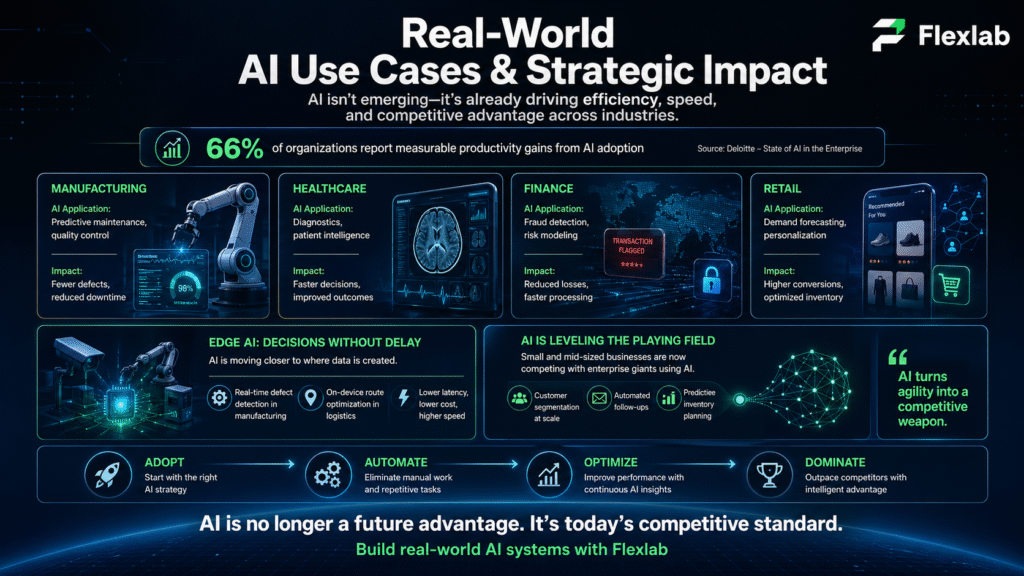


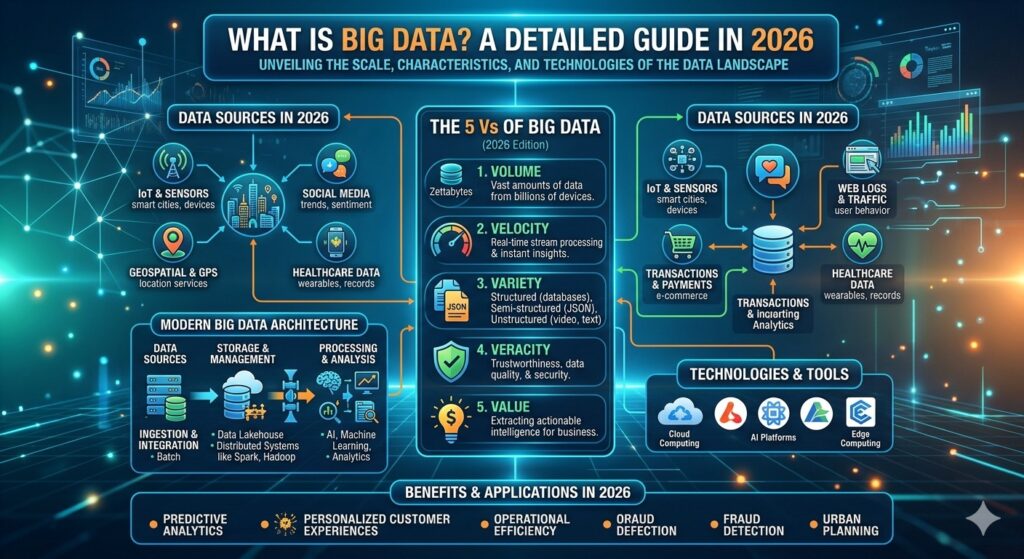

What is big data, and why do we need modern solutions? By a widely cited estimate, about 2.5 quintillion bytes of information are generated every day, from customer clicks and IoT sensors to financial transactions and social feeds. Big data solutions are the technologies that capture this volume, process it quickly, and turn it into actionable insights that drive revenue, cut costs, and sharpen your competitive edge.

Whether you’re fighting fraud in finance, personalizing retail experiences, predicting hospital patient outcomes, or optimizing manufacturing lines, big data bridges the gap between raw information overload and strategic advantage. Want to learn more? Let’s dive into the details below.

What is Big Data?

Big data refers to massive, complex, and rapidly growing datasets that cannot be easily managed and analyzed with traditional database systems. It encompasses both structured information (e.g., a list of financial transactions or an inventory ledger) and unstructured information (e.g., social media posts or videos). It also includes mixed collections, such as those used to train large language models, which may contain anything from a work of Shakespeare to a company’s budget spreadsheets for the last 10 years.

Managing this information and extracting insight from it requires advanced technologies, because conventional processing methods cannot handle the scale, speed, and complexity involved. With the right technologies, businesses can analyze patterns, detect anomalies, and make more precise forecasts. This helps them refine strategies, reduce operational inefficiencies, and improve customer experience.

For instance, data lakes ingest, process, and store structured, semi-structured, and unstructured information in its native format. This makes them well suited to handling large volume, variety, and velocity while supporting predictive analytics, machine learning, and integration with AI systems.

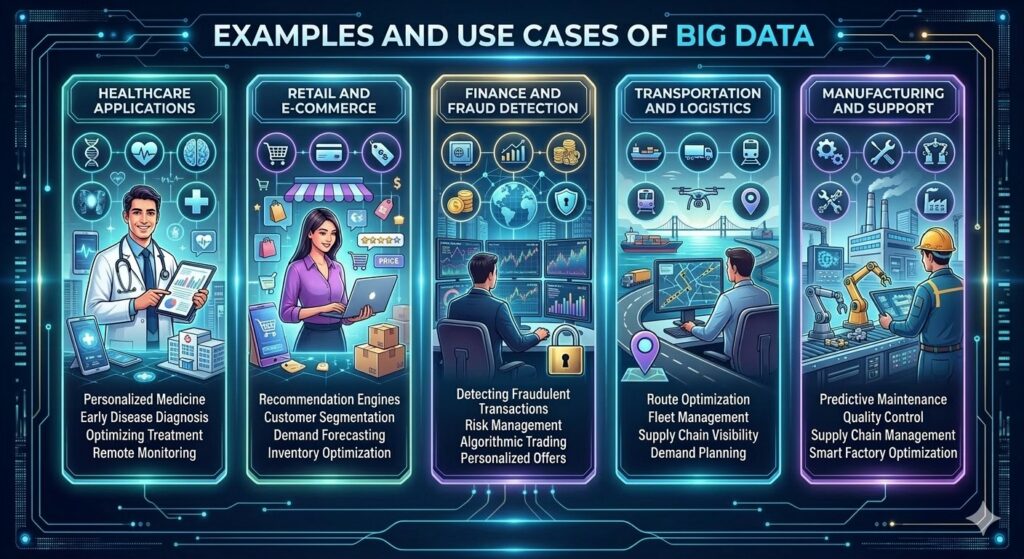

Examples and Use Cases

Big data drives transformation across many industries, including:

- Healthcare Applications

- Retail and E-Commerce

- Finance and Fraud Detection

- Transportation and Logistics

- Manufacturing and Supply Chain

Healthcare Applications

The healthcare industry combines big data with natural language processing (NLP) to analyze unstructured information, such as clinical notes and lab results, to accelerate drug development and enable personalized treatments. Hospitals also use it to predict patient outcomes and track epidemics.

Retail and E-Commerce

Retailers use it to analyze purchase history and browsing patterns so they can deliver hyper-personalized recommendations, segment their markets, and optimize inventory. Amazon and Walmart, for instance, use these techniques to boost sales through dynamic pricing and customer segmentation.

Finance and Fraud Detection

Big data is vital in financial services for fraud detection and risk management. Banks compare transaction patterns in real time against historical records to identify fraud as it happens, which reduces losses. It also enables risk assessment and the development of tailored financial products.
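To make the idea of comparing a live transaction against historical records concrete, here is a minimal sketch. It assumes a simple z-score rule; the function name `is_suspicious`, the threshold of 3 standard deviations, and the sample amounts are all hypothetical, and production fraud systems typically use far richer features and models.

```python
from statistics import mean, stdev

def is_suspicious(history, amount, threshold=3.0):
    """Flag `amount` if it is more than `threshold` standard deviations
    away from the mean of the customer's past transaction amounts."""
    if len(history) < 2:
        return False  # not enough history to estimate the spread
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        return amount != mu  # every past amount was identical
    return abs(amount - mu) / sigma > threshold

# Hypothetical past amounts for one customer
history = [42.0, 38.5, 51.0, 47.25, 40.0, 44.9]
print(is_suspicious(history, 45.0))   # a typical amount
print(is_suspicious(history, 900.0))  # far outside the usual range
```

The same check can run on every incoming transaction, with the history refreshed as new records arrive.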

Transportation and Logistics

Companies optimize routes using traffic patterns, GPS, and demand forecasting to improve efficiency in last-mile delivery and fleet management. Uber, for example, uses big data for surge pricing and predictive maintenance.

Manufacturing and Supply Chain

IoT sensors enable predictive maintenance and energy optimization, while satellite and geospatial records support supply chain optimization. Together, these minimize downtime and support sustainability tracking.

Other Key Sectors

In agriculture, sensor and satellite data help improve crop yields; sports analytics enhance performance; and governments improve public services, such as traffic management. Google applies big data across its ecosystem, including search, ads, and maps.

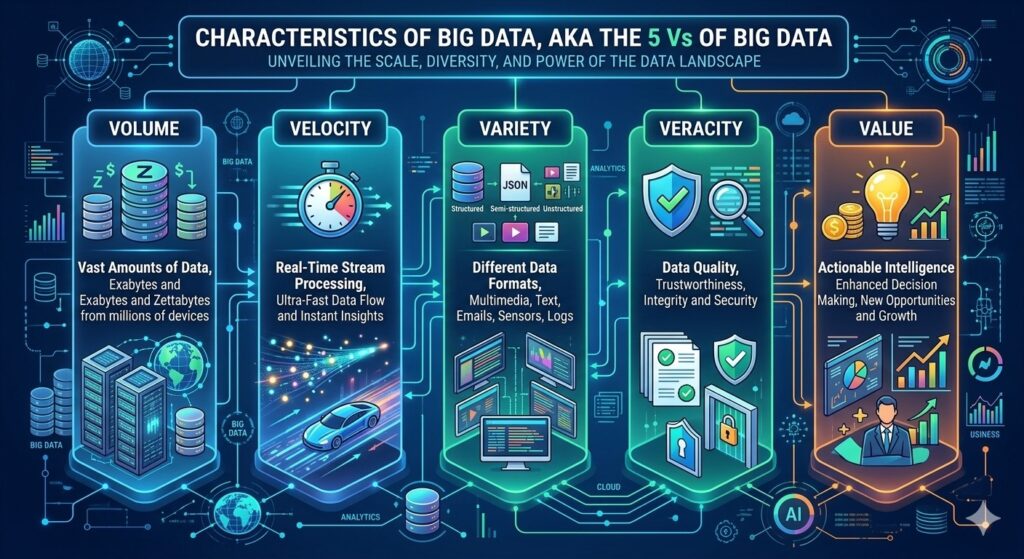

Characteristics of Big Data, AKA the 5 V’s

The 5 V’s describe the core characteristics that differentiate big data from traditional datasets and explain what it takes to manage it effectively. Here are the 5 V’s:

- Volume

- Velocity

- Variety

- Veracity

- Value

Volume

Big data is “big” because of the large amount of information generated every second from web applications, IoT devices, transaction systems, and more. Traditional storage and processing tools often struggle at this scale, making it difficult for organizations to capture and retain what they need.

Fortunately, modern platforms, especially cloud-based storage and distributed systems, now help store, process, and protect these rapidly growing datasets so that important information isn’t lost.

Velocity

Velocity is the speed at which information is created, collected, and processed, and it is extremely high in big data scenarios. Data arrives continuously, often within milliseconds, from real-time social feeds, streaming sensor readings, or high-frequency trading records.

To keep up, organizations rely on stream-processing frameworks and in-memory technologies to ingest, analyze, and respond to data in near-real time. As a result, decisions can be made faster and on fresher information.
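As a rough illustration of in-memory stream processing, the sketch below keeps a running average over a sliding time window. The class name `SlidingWindowAverage` and the 10-second window are assumptions made for the example; real stream-processing frameworks add partitioning, fault tolerance, and out-of-order handling on top of this basic idea.

```python
from collections import deque

class SlidingWindowAverage:
    """Running average of the values seen in the last `window_seconds`."""

    def __init__(self, window_seconds):
        self.window_seconds = window_seconds
        self.events = deque()  # (timestamp, value) pairs, oldest first
        self.total = 0.0

    def add(self, timestamp, value):
        """Ingest one event and return the current windowed average.
        Assumes events arrive in timestamp order."""
        self.events.append((timestamp, value))
        self.total += value
        # Evict events that have fallen out of the window.
        cutoff = timestamp - self.window_seconds
        while self.events and self.events[0][0] <= cutoff:
            _, old_value = self.events.popleft()
            self.total -= old_value
        return self.total / len(self.events)

window = SlidingWindowAverage(window_seconds=10)
for ts, reading in [(0, 10.0), (5, 20.0), (12, 30.0)]:
    print(ts, window.add(ts, reading))
```

Because each event is processed once and old events are dropped as they expire, memory use stays bounded no matter how long the stream runs.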

Variety

Variety captures the wide range of formats that big data can take. Beyond traditional structured tables, it includes unstructured information such as text, audio, images, and video, as well as semi-structured formats such as JSON or XML that have some organization but no fixed schema.

Handling this mix requires flexible architectures, such as NoSQL databases, data lakes, and schema-on-read approaches, that can store, combine, and analyze multiple types in a single environment.
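Schema-on-read can be sketched in a few lines: records are stored as raw JSON, and a schema is applied only when they are read. The records, the field names, and the helper `read_with_schema` below are hypothetical and exist only to illustrate the approach.

```python
import json

# Raw, semi-structured records stored as-is: fields and types vary.
RAW_RECORDS = [
    '{"user": "a1", "amount": "19.99", "tags": ["promo"]}',
    '{"user": "b2", "amount": 5}',
    '{"user": "c3", "note": "no amount recorded"}',
]

def read_with_schema(lines, schema):
    """Apply `schema` at read time. `schema` maps each wanted field to a
    (cast, default) pair; fields not in the schema are ignored."""
    for line in lines:
        doc = json.loads(line)
        row = {}
        for field, (cast, default) in schema.items():
            row[field] = cast(doc[field]) if field in doc else default
        yield row

schema = {"user": (str, None), "amount": (float, 0.0)}
rows = list(read_with_schema(RAW_RECORDS, schema))
for row in rows:
    print(row)
```

A different team could read the same raw records with a different schema, for example one that keeps `tags`, without changing what is stored.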

Veracity

Veracity refers to how trustworthy, accurate, and consistent the data is. Because it comes from many different sources and at high speed, it often contains duplicates, inconsistencies, missing values, or outright errors that can distort insights.

Organizations, therefore, need robust quality practices, including cleaning, validation, and verification, to filter out noise and ensure analytics and models are built on reliable data.
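The cleaning and validation steps above can be sketched as a single pass that rejects records with missing values, duplicate IDs, or invalid amounts. The field names `id` and `amount` and the function `clean_records` are assumptions for illustration; real pipelines usually apply many more rules.

```python
def clean_records(records, required=("id", "amount")):
    """Split records into (clean, rejected). Each rejected entry is a
    (record, reason) pair so the problem can be reviewed later."""
    seen_ids = set()
    clean, rejected = [], []
    for rec in records:
        if any(rec.get(field) is None for field in required):
            rejected.append((rec, "missing field"))
            continue
        if rec["id"] in seen_ids:
            rejected.append((rec, "duplicate id"))
            continue
        if not isinstance(rec["amount"], (int, float)) or rec["amount"] < 0:
            rejected.append((rec, "invalid amount"))
            continue
        seen_ids.add(rec["id"])
        clean.append(rec)
    return clean, rejected

records = [
    {"id": 1, "amount": 10.0},
    {"id": 1, "amount": 10.0},   # duplicate
    {"id": 2, "amount": None},   # missing value
    {"id": 3, "amount": -5},     # invalid amount
    {"id": 4, "amount": 7},
]
clean, rejected = clean_records(records)
print(len(clean), "clean;", [reason for _, reason in rejected])
```

Keeping the rejected records with a reason, rather than silently dropping them, makes it easier to trace quality problems back to their source.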

Value

Value is the business impact organizations can extract from information. When managed and analyzed effectively, it can improve operations, enhance customer experiences, uncover new revenue streams via predictive modeling, and reveal emerging risks or opportunities.

Furthermore, using advanced analytics, machine learning, and AI, companies turn raw, complex information into actionable insights that drive smarter strategies and measurable outcomes.

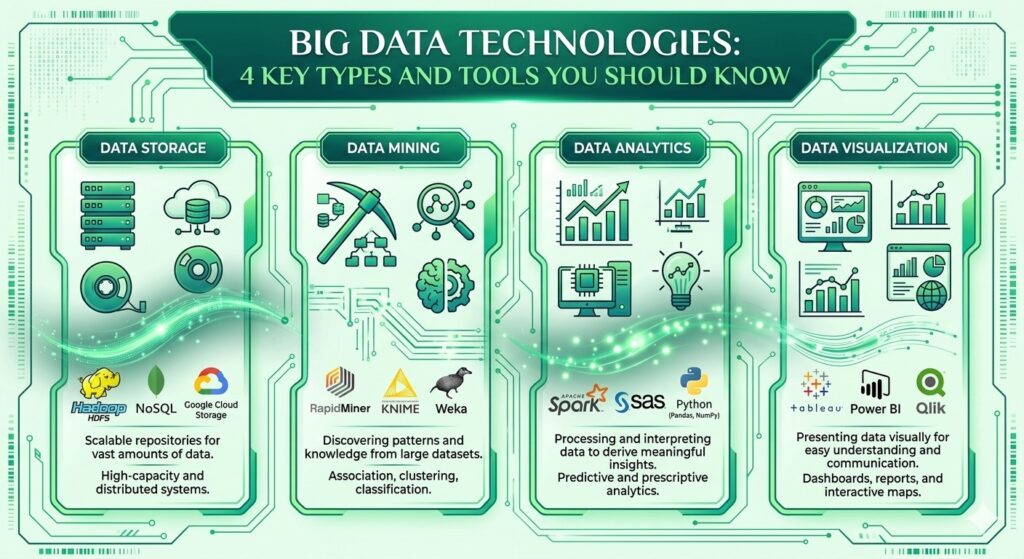

Big Data Technologies: 4 Key Types and Tools You Should Know

These technologies are usually grouped into four core categories:

- Data Storage

- Data Mining

- Data Analytics

- Data Visualization

Each category includes specialized tools that help you capture, manage, and turn large, complex datasets into insights that drive decisions. Let’s look at each type in detail:

1. Data Storage

These technologies are designed to collect, store, and manage large volumes of structured and unstructured data. They provide scalable infrastructure that keeps information accessible and integrated with other systems. Popular tools for data storage include Apache Hadoop and MongoDB, which excel at handling massive datasets.

- Apache Hadoop

Apache Hadoop is an open-source framework for storing and processing large datasets across clusters of commodity hardware. Its distributed architecture enables parallel processing, faster handling of very large datasets, and support for multiple formats. It’s known for its fault tolerance, scalability, and suitability for both batch processing and long-term storage.

- MongoDB

MongoDB is a popular NoSQL database built to handle large volumes of semi-structured or unstructured data. Specifically, it stores information in flexible, JSON-like documents grouped into collections, which makes it easy to evolve schemas over time. Because it scales horizontally and handles varied data types, MongoDB is widely used for high-velocity applications, content platforms, and real-time analytics backends.

2. Data Mining

Data mining focuses on extracting useful patterns, correlations, and trends from raw data. These technologies help turn both structured and unstructured data into actionable information. Key tools used for mining include RapidMiner and Presto, which streamline complex analysis processes.

- RapidMiner

RapidMiner is a data mining and machine learning platform for building end-to-end predictive models. Specifically, it supports data preparation, feature engineering, model training, and evaluation in a single environment, along with low-code workflows. As a result, organizations use it to operationalize advanced analytics and AI automation across use cases like churn prediction, scoring, and risk analysis.

- Presto

Presto is an open-source, distributed SQL query engine created to run interactive analytics on very large datasets. It can query data where it lives across data lakes, warehouses, and other systems, while joining multiple sources in one statement. This makes it well-suited for ad hoc exploration and fast analytics without heavy data movement.

3. Big Data Analytics

Big data analytics tools, such as Apache Spark and Splunk, clean, transform, and model data to support business decisions. Once the data has been mined and prepared, these technologies enable advanced queries, algorithms, and predictive analytics at scale.

- Apache Spark

Apache Spark is a widely used big data analytics engine known for its speed and in-memory processing. Unlike traditional MapReduce, Spark keeps data in RAM when possible, which makes iterative workloads and complex analytics significantly faster. Moreover, it supports batch processing, streaming, machine learning, and graph processing through a unified framework.

- Splunk

Splunk specializes in analyzing machine-generated data such as logs, metrics, and event streams. Specifically, it ingests large volumes of data, indexes it, and provides search, dashboards, alerts, and reports. With built-in support for advanced analytics and AI, Splunk helps teams detect anomalies, troubleshoot systems, and derive operational insights in near real time.

4. Data Visualization

These technologies turn large, complex datasets into charts, dashboards, and stories that stakeholders can quickly understand. They are essential for communicating insights and driving action. Key tools include Tableau and Looker, which make complex data accessible to non-technical users.

- Tableau

Tableau is a leading visualization platform with a drag-and-drop interface that makes it easy to build dashboards and a wide range of chart types: bar, line, box plots, maps, Gantt charts, and more. Moreover, it connects to many data sources and supports secure sharing, thus enabling teams to explore data interactively and align around shared metrics.

- Looker

Looker is a modern business intelligence (BI) tool that sits on top of your warehouse and helps define consistent metrics while delivering interactive visualizations. Specifically, using a semantic modeling layer, teams can build reusable definitions and then create dashboards and reports for use cases like monitoring brand engagement, product performance, or customer behavior over time.

How Can Big Data Solutions Improve Business Decisions?

Big data improves business decisions by replacing intuition and guesswork with evidence-based, real-time insights drawn from large, diverse datasets. Let’s look at the main benefits:

Better, Faster Decisions

Platforms aggregate data from many sources and analyze it in real time, so leaders can react quickly to changing customer behavior and market conditions. Moreover, this reduces uncertainty and enables faster, more confident choices grounded in facts rather than opinions.

Deeper Customer and Market Insight

Analytics on customer interactions, transactions, and feedback reveals patterns in preferences, churn drivers, and demand trends. As a result, businesses use these insights to refine products, personalize experiences, and spot new opportunities or underserved segments.

Operational Efficiency and Cost Control

Examining process data across operations and supply chains highlights bottlenecks, waste, and automation opportunities. Organizations that adopt real-time analytics report faster decision speed and lower operational costs through optimized workflows and resource allocation.

Risk Management and Fraud Detection

Big data analytics monitors transactions and events at scale to detect anomalies that indicate fraud, failures, or emerging risks. Predictive models help forecast issues before they escalate, allowing proactive interventions instead of reactive firefighting.

Competitive Advantage and Innovation

Data-driven decision-making uncovers hidden patterns and trends that competitors may miss, enabling better product-market fit and smarter strategies. Firms with strong data cultures make decisions faster, innovate more effectively, and are significantly more likely to acquire and retain customers.

11 Big Data Solutions in 2026

These solutions help organizations collect, process, and analyze massive, fast-changing datasets so they can improve efficiency, reduce risk, and unlock new growth opportunities. As reliance on analytics increases, choosing the right mix of technologies becomes critical for accuracy, scalability, and long-term value.

1. Cloud-Based Platforms

Cloud-native platforms provide elastic storage and compute so teams can scale up for heavy workloads and scale down to control costs. They reduce dependence on on-premises hardware, simplify maintenance, and offer built-in tools for security, monitoring, and analytics. Common use cases include real-time transaction analysis, secure storage of sensitive records, and large-scale risk modeling across global operations.

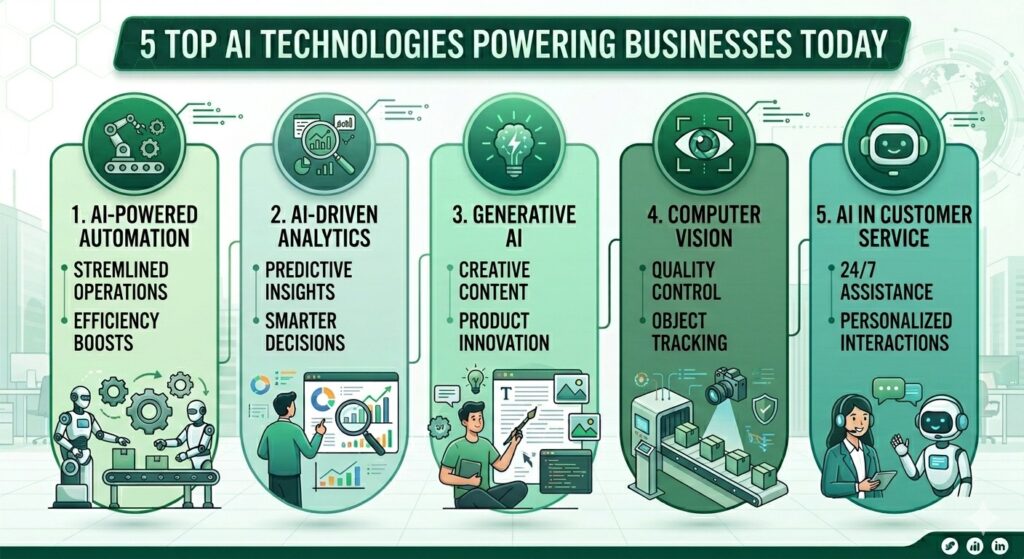

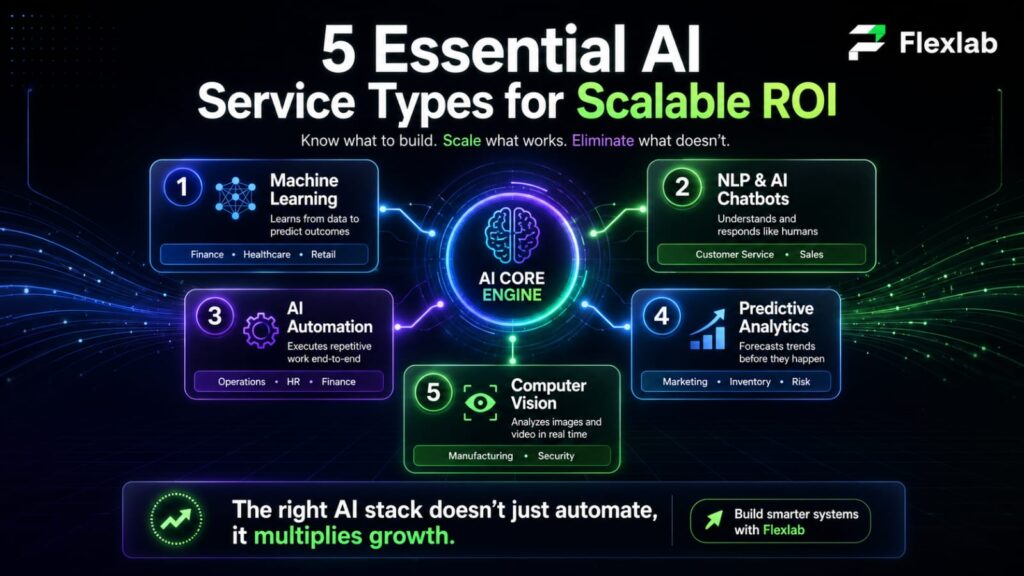

2. AI-Powered Analytics

AI and machine learning enhance big data analytics by automatically detecting patterns, predicting outcomes, and handling unstructured data at scale. Specifically, organizations apply AI to spot fraud, personalize customer journeys, score leads, and automate complex decision workflows. Over time, models learn from new data, thus improving prediction accuracy and making insights more reliable for strategic planning.

3. Edge Computing for Processing

Edge computing processes data close to where it’s generated, on devices, gateways, or local nodes, rather than sending everything to a central cloud. This approach reduces latency, bandwidth usage, and dependence on always-on connectivity. Industries like manufacturing, logistics, and healthcare use edge analytics to monitor equipment health, optimize routing, and react quickly to critical events such as anomalies in patient vitals or production lines.
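One way to picture the bandwidth saving is a device that summarizes its readings locally and forwards only the summary plus any out-of-range values. The function `summarize_at_edge`, the alert threshold, and the sample readings are hypothetical; this is a sketch of the pattern, not of any particular edge platform.

```python
def summarize_at_edge(readings, alert_above):
    """Reduce a batch of local readings to a small summary plus the
    readings that exceed `alert_above`, so only these leave the device."""
    if not readings:
        raise ValueError("readings must not be empty")
    summary = {
        "count": len(readings),
        "min": min(readings),
        "max": max(readings),
        "mean": sum(readings) / len(readings),
    }
    alerts = [r for r in readings if r > alert_above]
    return summary, alerts

# Hypothetical temperature readings collected on a local gateway
readings = [70.1, 70.4, 98.6, 70.2]
summary, alerts = summarize_at_edge(readings, alert_above=90.0)
print(summary["count"], summary["max"], alerts)
```

Instead of streaming every reading to the cloud, the gateway sends four summary numbers and a short alert list per batch.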

4. Data Lakes for Enterprise

Data lakes serve as centralized repositories that store structured, semi-structured, and unstructured data in its raw form. Moreover, this flexible model supports advanced analytics, AI/ML experimentation, regulatory reporting, and cross-team collaboration on shared datasets. By decoupling storage from compute, data lakes let organizations scale cost-effectively while keeping a single source of truth for diverse data types.

5. Blockchain for Data Security and Integrity

Blockchain-based solutions create tamper-resistant ledgers that strengthen trust in critical data and transactions. For example, they are used to track product provenance, secure patient records, and record financial events in an auditable, transparent way. As a result, immutable logs and cryptographic verification help reduce fraud, support compliance, and simplify cross-organizational data sharing without sacrificing security.
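The tamper-resistance described here rests on hash chaining: each record stores the hash of the previous one, so changing any earlier record breaks every later link. The sketch below shows only that mechanism, using hypothetical helper names (`append_block`, `verify`); a real blockchain adds distributed consensus, signatures, and replication.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first block

def block_hash(index, payload, prev_hash):
    """SHA-256 over a canonical JSON encoding of the block contents."""
    body = json.dumps(
        {"index": index, "payload": payload, "prev": prev_hash},
        sort_keys=True,
    )
    return hashlib.sha256(body.encode("utf-8")).hexdigest()

def append_block(chain, payload):
    """Append a new block that links to the hash of the last block."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS_HASH
    index = len(chain)
    chain.append({
        "index": index,
        "payload": payload,
        "prev": prev_hash,
        "hash": block_hash(index, payload, prev_hash),
    })

def verify(chain):
    """Recompute every hash and check each link to the previous block."""
    prev_hash = GENESIS_HASH
    for i, block in enumerate(chain):
        if block["index"] != i or block["prev"] != prev_hash:
            return False
        if block["hash"] != block_hash(i, block["payload"], prev_hash):
            return False
        prev_hash = block["hash"]
    return True

ledger = []
append_block(ledger, {"item": "pallet-17", "qty": 40})
append_block(ledger, {"item": "pallet-17", "qty": 38})
print(verify(ledger))           # intact ledger
ledger[0]["payload"]["qty"] = 999
print(verify(ledger))           # edited record no longer matches its hash
```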

6. Data Visualization Tools

Data visualization tools turn complex datasets into intuitive dashboards, charts, and interactive reports. In addition, they enable business and technical teams to monitor KPIs, explore trends, and spot anomalies without deep coding skills. Real-time data processing and self-service visualizations further improve communication across departments and help leadership respond faster to shifts in performance, demand, or risk.

7. Hybrid Data Management

Hybrid data architectures combine on-premises and cloud environments so organizations can balance control, compliance, and scalability. Sensitive or regulated data can remain on-site, while anonymized or aggregated data is analyzed in the cloud using advanced analytics services. This approach supports regional compliance rules, optimizes infrastructure spend, and gives enterprises flexibility as their data-driven strategy evolves.

8. Predictive Analytics for Proactive Strategies

Predictive analytics uses historical and real-time data, statistical models, and machine learning to forecast future events and behaviors. Businesses implement these solutions for demand forecasting, churn prediction, risk scoring, pricing optimization, and capacity planning. By anticipating trends and disruptions early, organizations can adjust strategies proactively, reduce waste, and allocate resources more effectively.
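As a minimal example of forecasting from historical data, the sketch below fits a straight-line trend by ordinary least squares and extrapolates it. The function names `fit_line` and `forecast` and the weekly demand figures are made up for illustration; real demand forecasting usually accounts for seasonality, promotions, and other drivers.

```python
def fit_line(ys):
    """Least-squares line through the points (0, ys[0]), (1, ys[1]), ...
    Returns (slope, intercept)."""
    n = len(ys)
    if n < 2:
        raise ValueError("need at least two observations")
    xs = range(n)
    x_mean = sum(xs) / n
    y_mean = sum(ys) / n
    sxx = sum((x - x_mean) ** 2 for x in xs)
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = y_mean - slope * x_mean
    return slope, intercept

def forecast(ys, steps_ahead=1):
    """Extrapolate the fitted trend `steps_ahead` periods past the data."""
    slope, intercept = fit_line(ys)
    return intercept + slope * (len(ys) - 1 + steps_ahead)

# Hypothetical weekly demand with a steady upward trend
weekly_demand = [100, 110, 120, 130]
print(forecast(weekly_demand, steps_ahead=1))
```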

9. Automated Data Governance and Compliance

Automated data governance tools continuously classify data, enforce access policies, and track how information is used across systems. They help organizations meet regulatory requirements, reduce manual audit overhead, and lower the risk of non-compliance. Key features, like policy-based access control, lineage tracking, and automated reporting, improve transparency and strengthen internal controls.
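Policy-based access control with an audit trail can be sketched as a lookup from role to permitted data classifications, with every access attempt logged. The roles, classification labels, and the function `read_dataset` below are hypothetical and stand in for whatever a governance tool would define.

```python
# Hypothetical policy: which data classifications each role may read.
ROLE_CLEARANCE = {
    "analyst": {"public", "internal"},
    "compliance_officer": {"public", "internal", "restricted"},
}

def read_dataset(role, dataset, audit_log):
    """Return the dataset rows if the role's policy allows it.
    Every attempt, allowed or denied, is appended to `audit_log`."""
    allowed = dataset["classification"] in ROLE_CLEARANCE.get(role, set())
    audit_log.append(
        {"role": role, "dataset": dataset["name"], "allowed": allowed}
    )
    if not allowed:
        raise PermissionError(f"{role} may not read {dataset['name']}")
    return dataset["rows"]

audit_log = []
payroll = {"name": "payroll", "classification": "restricted", "rows": [1, 2, 3]}

print(read_dataset("compliance_officer", payroll, audit_log))
try:
    read_dataset("analyst", payroll, audit_log)
except PermissionError as err:
    print("denied:", err)
print(audit_log)
```

Because denied attempts are logged as well, the audit trail doubles as input for the automated reporting mentioned above.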

10. Streaming Analytics for Real-Time Processing

Streaming analytics platforms analyze data as it’s generated, which enables real-time detection of anomalies, threats, and opportunities. They are widely used in fraud detection, cybersecurity monitoring, network operations, and real-time customer experience optimization. Processing events in motion allows businesses to trigger immediate actions, such as blocking suspicious transactions, rerouting deliveries, or updating recommendations.

11. Data-as-a-Service (DaaS) for On-Demand Insights

DaaS solutions deliver curated, ready-to-use datasets via APIs or subscriptions, without requiring heavy internal infrastructure. Organizations augment their own information with external market, financial, location, or behavioral data to improve models and reporting. This model lets teams focus on insight generation and smart decision-making, while the provider handles data collection, cleaning, and delivery at scale.

Common Big Data Challenges that Prevent Businesses from Making Data-Driven Decisions

Even though the advantages of data-driven decision-making are well known, many organizations still struggle to put it into practice consistently. The most common challenges are:

Biased and Incomplete Data

Working with biased or partial data is like trying to assess a landscape through a fogged lens; it distorts reality and leads to flawed conclusions. Teams must learn to detect and correct bias, fill data gaps, and use the right tools and processes to ensure their analysis is as complete and accurate as possible.

Difficulty Interpreting Data

Having large volumes of data does not automatically translate into better decisions. Many organizations lack the analytical skills, frameworks, or context needed to turn raw numbers into clear, actionable insights that leaders can trust.

Integration with Existing Systems

New platforms and processes often have to coexist with legacy applications and siloed systems. When data integration is complex or incomplete, it becomes hard to create a unified data view, slowing or blocking the adoption of truly data-driven practices.

Resistance to Change

Moving from gut-driven to data-driven decisions requires a cultural shift. Established habits, organizational silos, and fear of transparency can all create pushback, making it difficult to embed data into everyday decision-making.

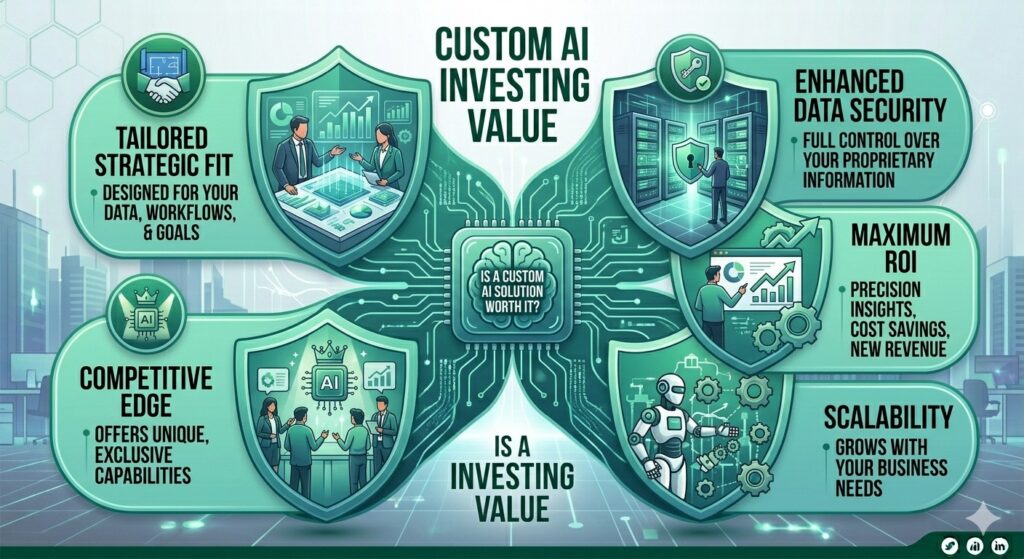

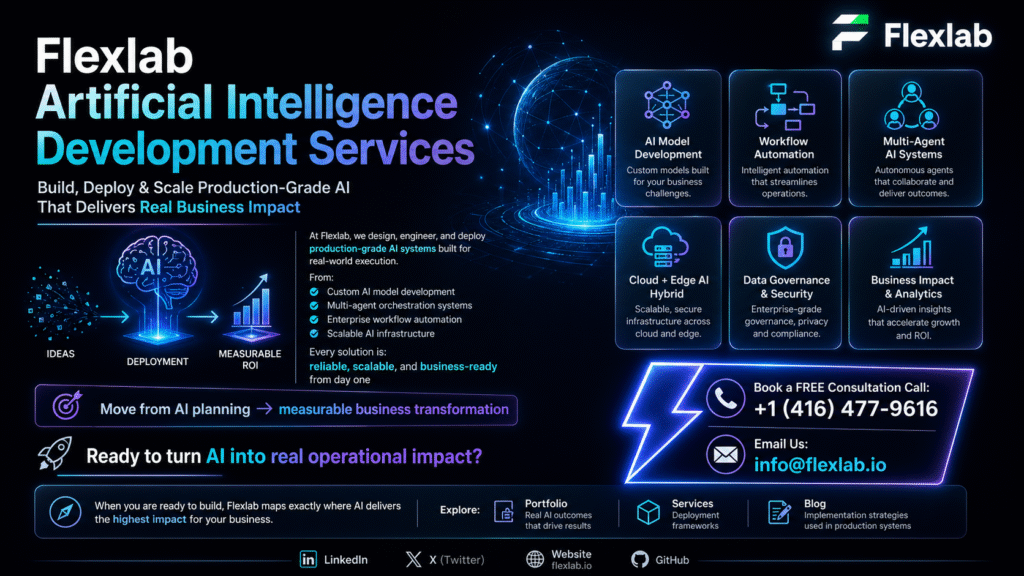

How Flexlab Powers Your Big Data Success

Flexlab transforms these challenges into competitive advantages through end-to-end AI and blockchain expertise. Our services deploy scalable data lakes, cloud-native platforms, and real-time streaming analytics tailored to your industry.

Are you looking for healthcare NLP for clinical insights, retail personalization engines, or manufacturing IoT predictive maintenance? Flexlab covers all of it. Our team handles everything from Hadoop/Spark cluster optimization and automated governance to custom Tableau/Looker dashboards, so you overcome data silos, bias issues, and integration hurdles while unlocking faster decisions, fraud detection, and revenue growth through production-ready solutions.

Conclusion: What is Big Data?

Big data transforms massive datasets into actionable insights that drive smarter decisions across healthcare, retail, finance, and logistics. The right cloud platforms, AI analytics, and streaming solutions overcome silos and bias to deliver real-time fraud detection, personalization, and predictive power.

Organizations that master it can see 30%+ revenue growth and major cost savings. Flexlab deploys production-ready solutions, from Spark clusters to automated governance, that deliver ROI in weeks.

Contact us today for your free consultation and discover exactly how to operationalize everything you’ve learned here. Visit our AI and blockchain blog and discover new helpful insights on the Benefits of AI in Supply Chain, The Role of AI Predictive Analytics, Is AI in Marketing Worth the Investment for Small Businesses, and What are Enterprise AI Solutions.

Ready to Turn Your Analytics Knowledge into Business Results?

📞 Book a FREE Consultation Call: +1 (416) 477-9616

📧 Email us: info@flexlab.io

What are big data solutions?

These solutions are integrated technologies and platforms, like cloud data lakes, AI analytics engines, streaming processors, and NoSQL databases, that manage massive, diverse datasets at high speed to deliver actionable business insights, improve decision-making, and drive efficiency across industries.

What tools are used for big data?

Key tools include Apache Hadoop/Spark for processing, MongoDB/Cassandra for NoSQL storage, Kafka/Flink for streaming, BigQuery/Snowflake for analytics warehouses, and Tableau/Power BI for visualization.

How to use big data to make business decisions?

Collect data from diverse sources into a unified platform, apply AI/ML for pattern detection and prediction, visualize insights via dashboards, and act on real-time alerts. This approach enables fraud detection, demand forecasting, personalization, and risk mitigation based on evidence and at speed.