Most Effective AI Frameworks for Automation in 2026


Why do some AI projects scale into real business systems while others never move beyond testing? In 2026, AI frameworks play a crucial role in determining whether an idea becomes a production-ready solution or remains stuck in experimentation. AI adoption is widespread; however, turning it into reliable, scalable systems is still a major challenge, and many teams struggle to get there.

According to McKinsey, nearly 88% of organizations now use AI in at least one function, yet many fail to scale it across operations. Moreover, industry research shows that a significant portion of AI initiatives never reach production due to gaps in architecture, workflow design, and execution strategy.

This is where AI automation frameworks become critical, as they define how systems connect, operate, and scale in real environments. Choosing the right AI automation tools directly impacts how effectively teams move from idea to real-world deployment. In this article, we break down the most effective AI frameworks for automation in 2026, how they work in real-world systems, and how to choose the right one for your business.

How AI Frameworks for Automation Work in Real Systems

In 2026, building real-world AI applications means designing structured systems that can handle complexity, adapt to change, and operate reliably over time. This shift is exactly why agentic AI frameworks are gaining attention across industries.

Rather than relying on static pipelines, modern systems are designed to operate in steps, respond to inputs, and adjust their behavior in response to changing conditions. As a result, businesses are moving toward more flexible architectures that support real-world operations instead of isolated tasks.

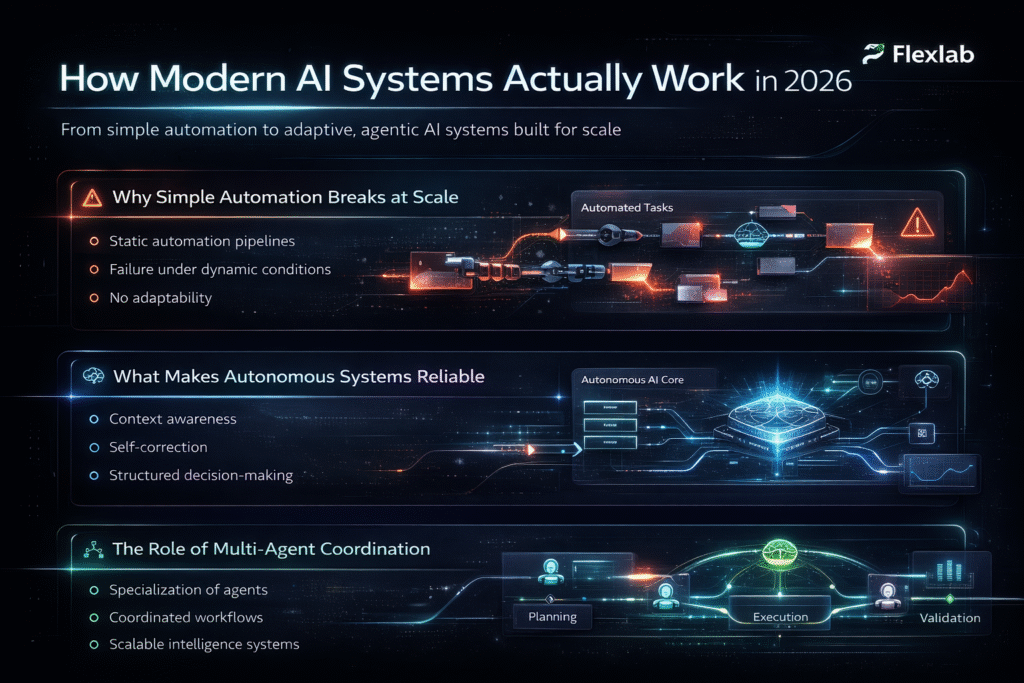

Why Simple Automation Breaks at Scale

Early automation software worked well for repetitive tasks; however, it struggles when workflows become dynamic. Once processes involve multiple steps, changing inputs, or external dependencies, traditional setups start to fail. That’s the point where AI workflow automation moves beyond fixed rules: systems now need to handle branching logic, interruptions, and real-time decisions.

For example, a workflow that processes customer requests may need to pause, retrieve external data, and resume based on the new information. Without this flexibility, automation becomes fragile and struggles to scale across real business environments.
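The pause-and-resume pattern described above can be sketched in plain Python. This is a minimal illustration under stated assumptions, not any framework's API: the `Request` class, the `Status` values, and the `advance` function are hypothetical names chosen for this example.

```python
from dataclasses import dataclass, field
from enum import Enum


class Status(Enum):
    NEW = "new"
    WAITING_FOR_DATA = "waiting_for_data"
    DONE = "done"


@dataclass
class Request:
    """A customer request moving through the workflow (hypothetical shape)."""
    text: str
    status: Status = Status.NEW
    data: dict = field(default_factory=dict)
    result: str = ""


def advance(req: Request) -> Request:
    """Move the request forward, pausing if required data is missing."""
    if req.status is Status.DONE:
        return req
    if "account" not in req.data:
        # Pause: the caller must fetch external data and call advance() again.
        req.status = Status.WAITING_FOR_DATA
        return req
    # Resume: enough information is available to finish the request.
    req.result = f"Handled '{req.text}' for account {req.data['account']}"
    req.status = Status.DONE
    return req


req = advance(Request("refund order"))
paused_status = req.status          # the workflow is paused at this point
req.data["account"] = "A-1001"      # external data arrives later
req = advance(req)                  # the workflow resumes with the new information
```

The key design choice is that the paused state is explicit data rather than a blocked thread, so the request can wait for minutes or days without holding resources.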

What Makes an Autonomous System Reliable?

A reliable system doesn’t just execute tasks; it maintains context, recovers from errors, and continues operating without constant supervision. At this stage, the concept of an autonomous AI agent becomes essential.

These systems are designed to:

- retain memory across steps

- retry failed actions

- make decisions based on context

In many cases, a human in the loop is added to validate outputs and handle critical decisions. At the same time, reliability depends on how well these capabilities are implemented. If memory fails or decisions lack structure, the entire workflow can break. Therefore, strong design becomes more important than just model performance.
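Two of the capabilities listed above, retrying failed actions and routing critical decisions to a person, can be sketched as follows. This is an illustrative example only: `with_retries`, `needs_human_review`, the retry count, and the 1000.0 threshold are all assumptions made for the sketch, not values from any specific framework.

```python
import time
from typing import Callable


def with_retries(action: Callable[[], str], attempts: int = 3, base_delay: float = 0.01) -> str:
    """Run action, retrying with exponential backoff; re-raise after the last attempt."""
    for attempt in range(attempts):
        try:
            return action()
        except RuntimeError:
            if attempt == attempts - 1:
                raise
            time.sleep(base_delay * 2 ** attempt)
    raise ValueError("attempts must be at least 1")


def needs_human_review(amount: float, threshold: float = 1000.0) -> bool:
    """Route high-impact decisions to a person (the threshold is an assumption)."""
    return amount >= threshold


calls = {"count": 0}


def flaky_lookup() -> str:
    """Simulated action that fails twice before succeeding."""
    calls["count"] += 1
    if calls["count"] < 3:
        raise RuntimeError("temporary failure")
    return "ok"


outcome = with_retries(flaky_lookup)
```

Capping the number of attempts matters as much as retrying: an unbounded retry loop hides failures instead of recovering from them.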

The Role of Coordination Across Agents

As systems grow more complex, a single agent is rarely enough. Different tasks often require separate components working together, which introduces the need for coordination.

This is where multi-agent orchestration plays a critical role. Instead of one system handling everything, multiple agents can specialize in planning, execution, and validation. For instance, one agent may gather data, another may process it, while a third may review the output before completion, especially in AI predictive analytics workflows.

However, coordination introduces its own challenges. If communication between agents is unclear or poorly structured, workflows can become inconsistent. That’s why modern frameworks focus heavily on managing these interactions efficiently.
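One way to keep communication between agents clear is to pass a structured message at every hand-off. The sketch below shows the gather, process, and review roles as plain functions; the `Message` type and the agent functions are hypothetical stand-ins, and a real agent would call a model or an external service where the comments indicate.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Message:
    """Structured hand-off between agents, so each step knows what it received."""
    sender: str
    payload: dict


def gatherer(topic: str) -> Message:
    # Stand-in for data collection; a real agent would call a model or an API.
    return Message("gatherer", {"topic": topic, "records": [3, 5, 8]})


def processor(msg: Message) -> Message:
    # Aggregates the gathered records into a single figure.
    records = msg.payload["records"]
    return Message("processor", {"topic": msg.payload["topic"], "total": sum(records)})


def reviewer(msg: Message) -> Message:
    # Validation step: flag outputs that fail a simple sanity check.
    approved = msg.payload["total"] > 0
    return Message("reviewer", {**msg.payload, "approved": approved})


final = reviewer(processor(gatherer("weekly demand")))
```

Because every message records its sender and a predictable payload, an inconsistent hand-off shows up as a missing key rather than as a silently wrong result.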

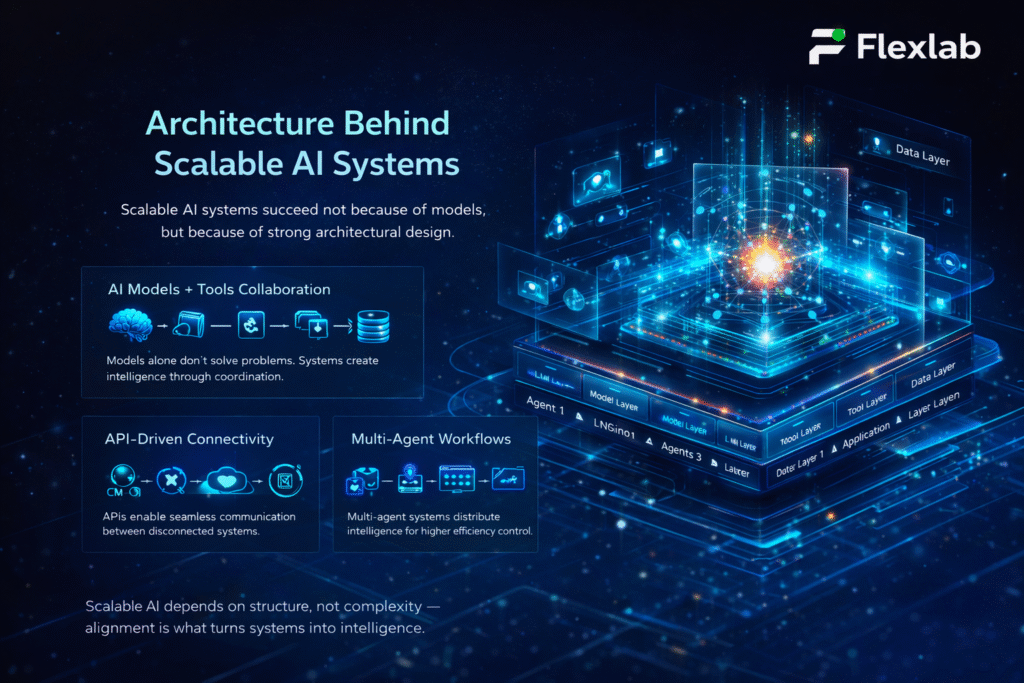

Architecture Behind Scalable AI Automation Systems

Most AI systems don’t fail because of bad models. They fail because the structure behind them can’t support how things actually run in real-world conditions. That’s where architecture makes the difference. As systems grow, they need to handle more data, more decisions, and more moving parts. Without a solid foundation, performance starts to decline, and small issues become bigger failures over time.

How AI Models and Tools Work Together

At the center of every system are AI models, but models alone don’t solve real problems. They need access to tools, data, and clear instructions to produce useful outcomes. Think of it this way: a model can generate answers, but it still needs context to act on them. That’s why modern systems combine models with tools that handle retrieval, execution, and validation.

However, when these components aren’t aligned, results become inconsistent. Therefore, proper system design becomes critical. A strong setup ensures each part works together rather than operating in isolation.
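A common way to align models and tools is a small registry: the model proposes a tool call, and the system validates and executes it. The sketch below assumes a fake model function in place of a real one; `TOOLS`, `fake_model`, and `run` are hypothetical names used only for illustration.

```python
from typing import Callable

# Registry mapping tool names to plain functions (all names are hypothetical).
TOOLS: dict[str, Callable[[str], str]] = {
    "lookup_order": lambda order_id: f"Order {order_id}: shipped",
    "echo": lambda text: text,
}


def fake_model(question: str) -> dict:
    """Stand-in for a model call that decides which tool to use."""
    if "order" in question.lower():
        return {"tool": "lookup_order", "argument": question.split()[-1]}
    return {"tool": "echo", "argument": question}


def run(question: str) -> str:
    """Ask the model for a tool call, validate it, then execute the tool."""
    call = fake_model(question)
    tool = TOOLS.get(call["tool"])
    if tool is None:
        raise KeyError(f"Unknown tool: {call['tool']}")
    return tool(call["argument"])


answer = run("Where is order 4821")
```

Validating the tool name before executing it is what keeps a model's mistake from turning into an unpredictable action.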

Connecting Systems Through APIs

An AI system cannot work alone. It needs to interact with databases, platforms, and external services, which is where API integration becomes essential. APIs (Application Programming Interfaces) allow different systems to communicate and exchange data without manual effort. For example, an AI workflow can pull customer data from a CRM, process it, and automatically send updates to another system.

In real-world environments, weak integrations quickly turn into delays and errors. If connections are slow or unreliable, the entire workflow is affected. For that reason, scalable systems depend on clean and efficient API connections.
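The CRM example above can be sketched with the integrations hidden behind small interfaces. Everything here is an assumption made for illustration: `CrmClient`, `Notifier`, and the in-memory fakes stand in for real HTTP clients, which in production would also need timeouts and error handling.

```python
from typing import Protocol


class CrmClient(Protocol):
    def get_customer(self, customer_id: str) -> dict: ...


class Notifier(Protocol):
    def send(self, recipient: str, message: str) -> None: ...


class InMemoryCrm:
    """Fake CRM used here in place of a real HTTP API."""

    def get_customer(self, customer_id: str) -> dict:
        return {"id": customer_id, "name": "Dana", "email": "dana@example.com", "open_tickets": 2}


class ListNotifier:
    """Fake downstream system that records messages instead of sending them."""

    def __init__(self) -> None:
        self.sent: list[tuple[str, str]] = []

    def send(self, recipient: str, message: str) -> None:
        self.sent.append((recipient, message))


def sync_customer(crm: CrmClient, notifier: Notifier, customer_id: str) -> None:
    """Pull a record, derive an update, and push it to another system."""
    customer = crm.get_customer(customer_id)
    message = f"{customer['name']} has {customer['open_tickets']} open ticket(s)"
    notifier.send(customer["email"], message)


notifier = ListNotifier()
sync_customer(InMemoryCrm(), notifier, "c-42")
```

Keeping the workflow logic separate from the transport makes it possible to test the workflow without a live connection and to swap a slow integration without rewriting the logic.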

Designing Multi-Agent Workflows

As systems become more advanced, they move beyond single flows and shift toward multi-agent workflows that divide tasks across specialized components. Instead of one system doing everything, responsibilities are split. One part gathers data, another processes it, and another reviews the output.

This approach improves efficiency and makes systems easier to manage. At the same time, more components require better coordination. If communication isn’t clear, workflows can become messy and harder to control. Strong design keeps everything structured without adding unnecessary complexity.

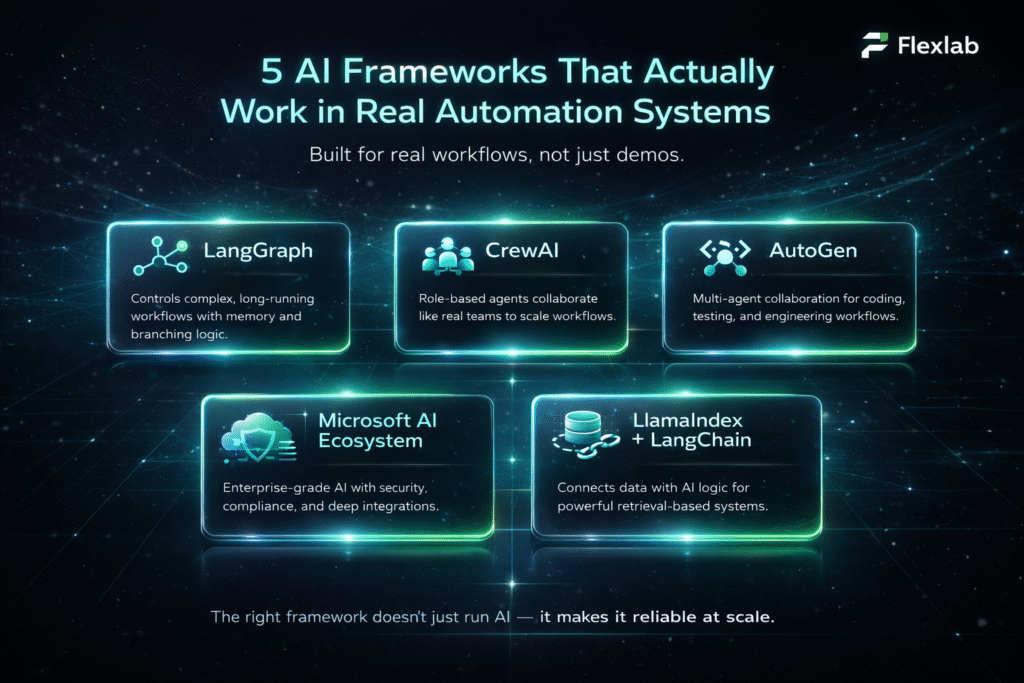

5 Best AI Frameworks for Automation That Work in Real Systems (2026)

There’s no shortage of AI tools in 2026. The real question is which ones can handle real workflows without breaking under pressure. Some frameworks look great in demos; however, they struggle when workflows become unpredictable or prolonged. The ones below stand out because teams are using them in actual systems, not just in experiments.

1. LangGraph for Complex Workflow Control

LangGraph is a strong option for AI agent automation in workflows that involve multiple steps, decisions, and delays, which is exactly where simple pipelines fall apart.

Instead of forcing everything into a linear flow, it allows systems to branch, pause, and resume based on conditions. That makes it useful for operations such as document review pipelines or internal approval systems.

One of its biggest strengths is how it handles long-running processes. Workflows don’t lose context, even when they stop and restart. The trade-off is that flexibility introduces complexity: teams often spend more time designing workflows up front and need solid engineering support to design flows correctly. If the structure isn’t planned well, complexity can grow quickly.

For instance, in insurance claim processing, workflows often pause for document verification, request additional data, and resume later. Systems like this handle those interruptions without losing context.
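To make "stop and restart without losing context" concrete, here is a minimal checkpointing sketch in plain Python. It does not use LangGraph itself; `ClaimState`, `save`, `load`, and `step_forward`, along with the step and document names, are hypothetical and exist only to illustrate the idea of persisting workflow state between runs.

```python
import json
from dataclasses import dataclass, asdict, field


@dataclass
class ClaimState:
    """State of one claim; field names are illustrative."""
    claim_id: str
    step: str = "intake"
    documents: list = field(default_factory=list)


def save(state: ClaimState) -> str:
    """Serialize state so the workflow can stop without losing context."""
    return json.dumps(asdict(state))


def load(blob: str) -> ClaimState:
    """Rebuild the state from a saved checkpoint."""
    return ClaimState(**json.loads(blob))


def step_forward(state: ClaimState) -> ClaimState:
    """Advance only when the required document has been received."""
    if state.step == "intake":
        state.step = "awaiting_documents"
    elif state.step == "awaiting_documents" and "police_report" in state.documents:
        state.step = "approved"
    return state


checkpoint = save(step_forward(ClaimState("CLM-7")))   # the process stops here
restored = load(checkpoint)                            # later, possibly in another process
restored.documents.append("police_report")
restored = step_forward(restored)
```

In a real deployment the checkpoint string would be written to a database or file store rather than kept in a variable.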

2. CrewAI for Role-Based Intelligent Systems

Some workflows feel less like automation and more like teamwork. That’s exactly the idea behind CrewAI, where multiple AI assistants take on specific roles within a system.

For example, in a sales workflow, one agent can handle research, another drafts outreach, while a third reviews messaging before it goes out. This division makes processes feel more natural and easier to scale.

The advantage here is speed. Teams can set up role-based systems without building everything from scratch. As workflows expand and the number of agents grows, keeping outputs consistent requires careful coordination, especially when roles start overlapping.

In sales operations, teams use role-based agents where one researches prospects, another drafts outreach, and a third reviews messaging before sending.

3. AutoGen for Engineering and Dev Workflows

When it comes to development-heavy environments, AutoGen stands out for building systems around LLM agents that can collaborate on tasks.

It’s widely used in scenarios like:

- code generation

- debugging workflows

- automated testing pipelines

Instead of a single system doing everything, multiple agents can write, review, and refine outputs together. The strength here is flexibility, especially for engineering teams. It fits naturally into development workflows and improves productivity.

In practice, performance can drop if agent interactions are not clearly defined. Without clear boundaries, agents may fall into unnecessary loops and retries that add delays and quickly increase resource usage.
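One simple boundary is a hard cap on conversation rounds. The sketch below is plain Python rather than AutoGen's API; the `writer` and `reviewer` functions are hypothetical stand-ins for model-backed agents, and `max_rounds` is an assumed parameter name.

```python
def writer(task: str, feedback: str) -> str:
    """Stand-in for a code-writing agent."""
    return f"{task} (revised: {feedback})" if feedback else task


def reviewer(draft: str) -> str:
    """Stand-in for a reviewing agent; returns '' when satisfied."""
    return "" if "revised" in draft else "add error handling"


def collaborate(task: str, max_rounds: int = 3) -> tuple[str, int]:
    """Alternate writer and reviewer, stopping on approval or at the round cap."""
    feedback = ""
    draft = task
    for round_number in range(1, max_rounds + 1):
        draft = writer(task, feedback)
        feedback = reviewer(draft)
        if not feedback:
            return draft, round_number
    return draft, max_rounds


draft, rounds = collaborate("parse config file")
```

With the cap in place, a reviewer that never approves costs at most `max_rounds` model calls per agent instead of running indefinitely.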

Engineering teams often apply this in pull request workflows, where one agent writes code, another tests it, and a third suggests improvements.

4. Microsoft Ecosystem for Enterprise Systems

For organizations already working within Microsoft environments, the Microsoft agent framework ecosystem offers a structured way to build AI systems.

Tools like Semantic Kernel are designed to integrate directly with enterprise platforms, making it easier to connect AI workflows with existing infrastructure. This works well in regulated environments where control, compliance, and security are critical.

This approach works best inside Microsoft-heavy environments. These tools are powerful within their ecosystem but less adaptable outside of it, so teams using mixed tech stacks may find limitations when they need cross-platform flexibility.

5. LlamaIndex & LangChain for Data + Logic Layers

When data is the hardest part of the problem, frameworks like LlamaIndex and LangChain become essential, especially for systems built on retrieval-augmented generation (RAG).

They focus on connecting AI systems to structured and unstructured data sources, making them ideal for:

- internal knowledge systems

- support copilots

- document-driven workflows

The biggest advantage is how quickly teams can connect data to models and start generating useful outputs. As data volume and complexity increase, performance tuning becomes necessary; without it, response quality and speed may decline.
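The retrieval step at the heart of RAG can be illustrated without any framework. The sketch below ranks documents by simple word overlap, a toy stand-in for the vector search a real system would use; `retrieve`, `build_prompt`, and the sample documents are assumptions made for this example and are not LlamaIndex or LangChain APIs.

```python
def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into a set of words."""
    return set(text.lower().split())


def retrieve(query: str, documents: list[str], top_k: int = 2) -> list[str]:
    """Rank documents by word overlap with the query (a toy stand-in for vector search)."""
    query_tokens = tokenize(query)
    scored = [(len(query_tokens & tokenize(doc)), doc) for doc in documents]
    ranked = sorted(scored, key=lambda pair: pair[0], reverse=True)
    return [doc for score, doc in ranked[:top_k] if score > 0]


def build_prompt(query: str, context: list[str]) -> str:
    """Combine retrieved passages with the question before calling a model."""
    joined = "\n".join(f"- {passage}" for passage in context)
    return f"Answer using only this context:\n{joined}\n\nQuestion: {query}"


docs = [
    "Refunds are processed within five business days",
    "Password resets require email verification",
    "Refunds need an order number",
]
hits = retrieve("how are refunds processed", docs)
prompt = build_prompt("how are refunds processed", hits)
```

The `top_k` value is one of the tuning knobs mentioned above: too low and relevant passages are missed, too high and the prompt fills with noise.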

Comparison of the Best AI Frameworks for Automation in 2026

Here’s a quick comparison of the most effective AI frameworks for automation based on real-world performance, strengths, and limitations.

| Framework | Best Use Case | Strength Area | Where It Struggles |
| --- | --- | --- | --- |
| LangGraph | Complex, long workflows | State + branching | Setup complexity |
| CrewAI | Role-based automation | Fast multi-agent setup | Consistency at scale |
| AutoGen | Dev + coding workflows | Agent collaboration | Resource-heavy loops |
| Microsoft Stack | Enterprise systems | Security + integration | Less flexible outside ecosystem |
| LlamaIndex + LangChain | Data-driven systems | Data connectivity | Needs optimization |

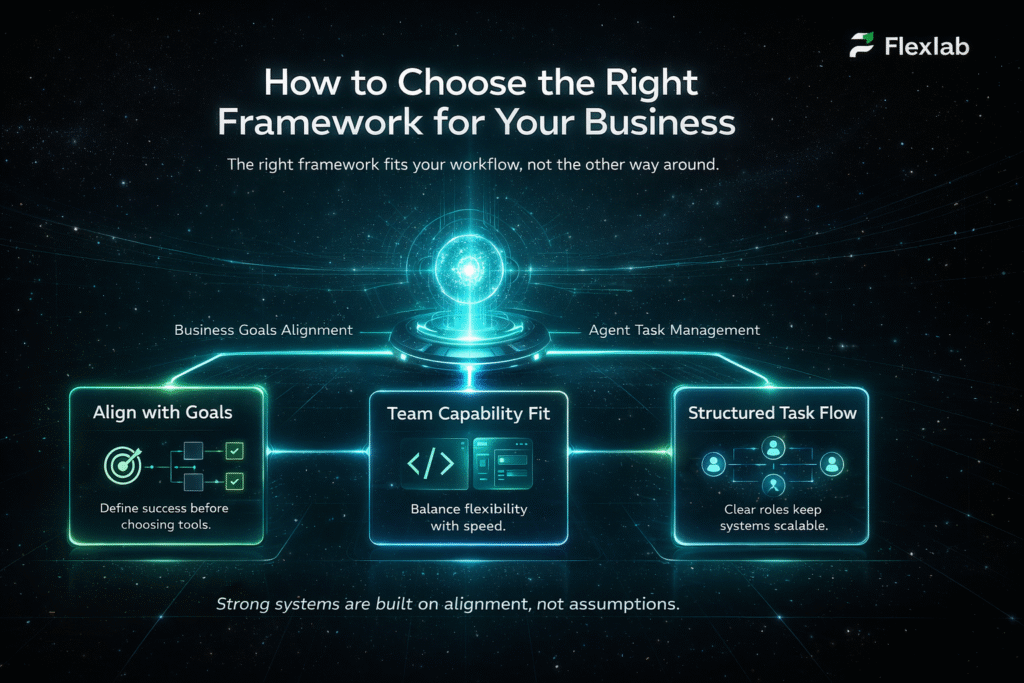

How to Choose the Right AI Framework for Automation

Choosing the right framework is about knowing what actually fits your workflow, your team, and the way your system needs to operate. Many teams make the mistake of chasing features; however, what really matters is how well a framework performs under real conditions. The right choice should simplify your process, not add unnecessary complexity.

Aligning Frameworks with Business Goals

Every system should start with a clear purpose. In real-world AI in business environments, workflows vary widely depending on the industry and use case. For example, a customer support system has very different requirements compared to an internal operations workflow. One may prioritize speed and responsiveness, while the other focuses on accuracy and validation.

The first step is simple: define what success looks like. Once that’s clear, it becomes easier to match a framework that supports those goals instead of forcing a mismatch.

Developer vs No-Code Decisions

The ideal technical depth depends on each team’s distinct goals and expertise. Some prefer flexibility, while others need speed and simplicity. This is where AI engineering decisions come into play.

Developer-focused frameworks offer more control and customization. They allow teams to design complex workflows, integrate multiple systems, and fine-tune performance. On the other hand, no-code or low-code tools help teams move faster with less technical overhead. They’re useful for quick deployments; however, they may become limiting as systems grow more complex. The right balance depends on your team’s capabilities and long-term goals.

Managing Tasks Across Agents

As workflows become more advanced, managing coordination becomes just as important as building the system itself. This is where task management across autonomous AI agents starts to matter. Instead of treating tasks as isolated steps, modern systems break them into smaller responsibilities handled by different agents. This improves efficiency and allows systems to scale more naturally.

However, without proper structure, task management can become disorganized. Clear roles, defined responsibilities, and controlled communication are essential to keep everything running smoothly.

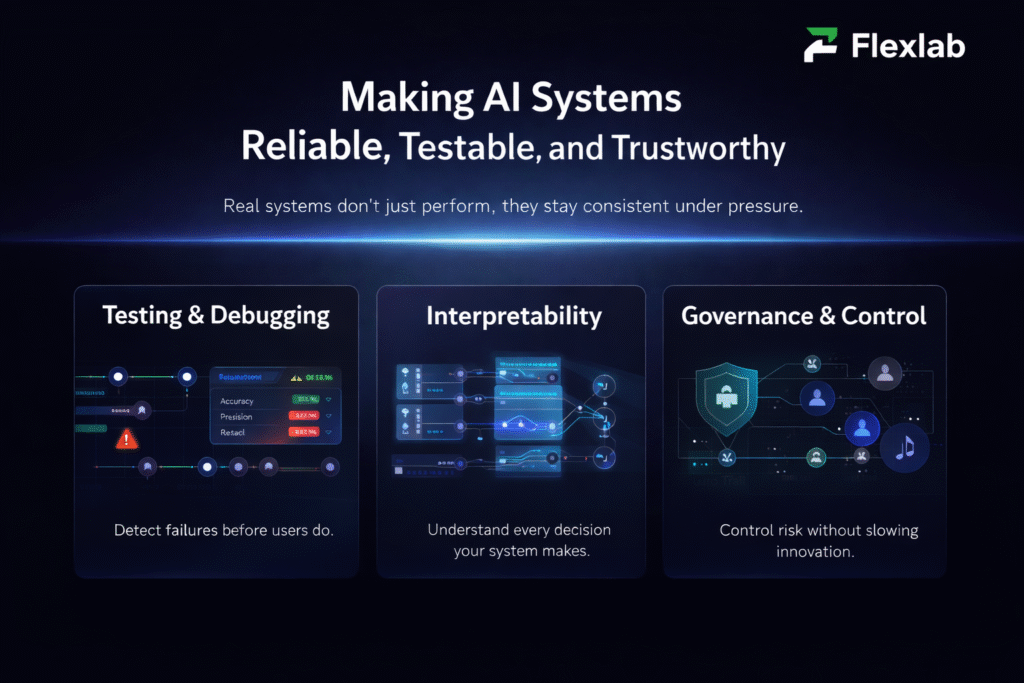

Making AI Automation Systems Reliable, Testable, and Trustworthy

Building an AI system is only half the job. The real challenge begins once it runs in real environments, where inputs change, edge cases arise, and unexpected behavior emerges.

Reliability isn’t just about performance; it’s about consistency over time. Systems need to be tested, monitored, and controlled, and they must adapt to changing inputs so they don’t break when conditions shift.

Testing and Debugging AI Systems

Unlike traditional software, AI systems don’t always behave the same way twice. That makes testing more complex and far more important. This is where AI testing tools come into play. They enable teams to track outputs, evaluate performance, and identify behavior that is drifting in the wrong direction.

For example, a system might perform well during initial testing but fail when exposed to real user inputs. Without proper testing, these issues often go unnoticed and affect real workflows later. Strong testing practices are therefore essential to maintain stability and avoid unexpected failures.
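Because outputs vary from run to run, a single passing test says little. One approach is to run each case many times and track a pass rate instead. The sketch below assumes a simulated nondeterministic step; `flaky_classifier`, `pass_rate`, and the 20% failure rate are invented for illustration.

```python
import random
from typing import Callable


def flaky_classifier(text: str, rng: random.Random) -> str:
    """Stand-in for a nondeterministic AI step: occasionally returns a bad label."""
    if rng.random() < 0.2:
        return "unknown"
    return "billing" if "invoice" in text else "general"


def pass_rate(system: Callable[[str, random.Random], str],
              cases: list[tuple[str, str]], runs: int, seed: int = 0) -> float:
    """Run every case several times and report the share of correct outputs."""
    rng = random.Random(seed)
    total = 0
    passed = 0
    for text, expected in cases:
        for _ in range(runs):
            total += 1
            if system(text, rng) == expected:
                passed += 1
    return passed / total


cases = [("invoice is wrong", "billing"), ("hello there", "general")]
rate = pass_rate(flaky_classifier, cases, runs=50)
```

A team would then compare `rate` against an agreed threshold and watch how it changes between releases, rather than expecting every individual run to pass.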

Understanding Model Behavior

Even when systems work, understanding why they produce certain outputs is just as important. This is where AI interpretability becomes critical. Teams need visibility into how decisions are made, especially in workflows that affect customers or operations. Without that clarity, it becomes difficult to trust or improve the system.

At the same time, complete transparency isn’t always easy to achieve. Many models operate in ways that are not fully explainable, which creates challenges for debugging and optimization. Improving interpretability therefore becomes essential: it enables teams to make better decisions and refine system behavior over time.

Governance and Risk Control

As AI systems take on more responsibility, controlling risk becomes a priority. That’s where AI governance tools empower organizations to maintain oversight.

These tools support:

- audit trails

- approval workflows

- access control

They ensure that systems operate within defined rules, especially in environments where compliance matters. However, governance should not slow down innovation. Instead, it should support safe and scalable growth. The goal is to create guardrails that keep systems reliable while still allowing them to evolve.
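The three controls listed above (audit trails, approval workflows, access control) can be combined in a single check. The following is a minimal sketch, not a real governance product: the roles, the `deploy` action, and the `authorize` function are hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Role-to-permission map; roles and actions are assumptions for this example.
PERMISSIONS = {"analyst": {"read"}, "admin": {"read", "deploy"}}


@dataclass
class AuditLog:
    """Append-only record of every authorization decision."""
    entries: list = field(default_factory=list)

    def record(self, user: str, action: str, allowed: bool) -> None:
        self.entries.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "user": user, "action": action, "allowed": allowed,
        })


def authorize(role: str, user: str, action: str, log: AuditLog, approved: bool = False) -> bool:
    """Check access, require explicit approval for deploys, and log every decision."""
    allowed = action in PERMISSIONS.get(role, set())
    if action == "deploy":
        allowed = allowed and approved
    log.record(user, action, allowed)
    return allowed


log = AuditLog()
results = [
    authorize("analyst", "sam", "deploy", log),                 # role lacks permission
    authorize("admin", "lee", "deploy", log),                   # permitted but not approved
    authorize("admin", "lee", "deploy", log, approved=True),    # permitted and approved
]
```

Denied attempts are logged as well as allowed ones, which is what makes the trail useful during a compliance review.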

Turning AI Frameworks into Real-World Systems with Flexlab

Exploring AI frameworks is only the starting point. The real challenge begins when you try to turn them into stable, scalable, production-ready systems that actually work in real business environments.

At Flexlab, we don’t just experiment with AI. We design, engineer, and deploy production-grade AI automation systems built for real-world execution. From multi-agent orchestration and API integrations to enterprise workflow automation, we focus on building solutions that are reliable, scalable, and business-ready from day one.

If your goal is to move beyond testing and implement AI that delivers measurable business impact, this is the right time to act with Flexlab.

Ready to Build AI Automation That Actually Works?

📞 Book a FREE Consultation Call: +1 (416) 477-9616

📧 Email Us: info@flexlab.io

If you want to see how this works in real scenarios, you can explore our portfolio or read practical insights on our blog. In addition, our services page explains how we design and implement AI systems step by step for real business use cases. If you’re ready to move forward, contact us to get started. You can also stay updated on LinkedIn, where we share real-world AI strategies and implementation insights that businesses are using today.


Final Takeaways on AI Frameworks for Automation in 2026

Choosing the right framework isn’t about chasing trends; instead, it’s about building systems that hold up under real conditions. The difference shows up when workflows run on a daily basis, not just in demos. Therefore, the best approach is to focus on stability, adaptability… In the long run, this ensures sustainable growth and a clear structure from the start.

Teams that succeed treat frameworks as long-term infrastructure, not short-term tools. For example, selecting a system that fits your workflow today reduces rework later. In addition, strong foundations make it easier to scale without breaking performance.

Ultimately, progress comes from execution. Start with one workflow, measure results, and improve step by step. Over time, that approach turns experiments into reliable systems that actually deliver business value.

What are the best AI frameworks for automation in 2026?

The best AI frameworks in 2026 depend on your use case and workflow complexity. Some are designed for multi-step automation, while others work better for fast deployment. Instead of following trends, most businesses choose frameworks based on how well they fit their systems. This approach helps improve performance and makes scaling easier over time.

Do businesses really need AI frameworks for automation?

AI frameworks become important when automation moves beyond simple tasks. Basic tools can handle repetitive work; however, they often fail in dynamic environments. Frameworks provide structure, allowing systems to adapt, make decisions, and run reliably. For this reason, most growing businesses rely on them for long-term automation.

How do I choose the right AI framework for my business?

Choosing the right AI framework starts with understanding your workflow and goals. If your processes involve multiple steps or integrations, you’ll need a more flexible solution. It also depends on your team’s technical skills and future scaling plans. In most cases, starting small and testing one workflow is the safest way to decide.